I've been picking on path replanning again. It is an interestingly annoying problem. I have currently settled on the following idea to make it somewhat manageable.

Prerequisite

In an ever-changing, dynamic game world, path finding and path following become statistical. Chances are that most of the time your requests will not pan out the way they were made. It is really important to have good failure recovery, both at the movement system level and at the behavior level. Plan for the worst, hope for the best.

Movement Request

At the core of the problem is the movement request. A movement request tells the crowd/movement system that some higher level logic would like an agent to move to a specific location. This call happens asynchronously.
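To make the asynchronous part a bit more concrete, here is a minimal sketch of how requests could be recorded and processed later. It assumes the movement system drains a queue with a per-update budget, which is my assumption and not something Detour prescribes. `Vec3`, `PendingRequest` and `RequestQueue` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>

// Hypothetical stand-in types; none of these names come from Detour.
struct Vec3 { float x, y, z; };

struct PendingRequest {
    int agent;    // index of the agent that should move
    Vec3 target;  // requested goal location
};

class RequestQueue {
public:
    // Called by the high level logic. Only records the request and returns.
    void request(int agent, const Vec3& target) {
        assert(agent >= 0);
        pending_.push_back(PendingRequest{agent, target});
    }

    // Called by the movement system once per update. Handles at most
    // maxPerUpdate requests and returns how many were handled.
    template <typename Fn>
    std::size_t process(std::size_t maxPerUpdate, Fn&& handle) {
        std::size_t n = 0;
        while (n < maxPerUpdate && !pending_.empty()) {
            // Copy first, so the handler may safely queue new requests.
            PendingRequest r = pending_.front();
            pending_.pop_front();
            handle(r);
            ++n;
        }
        return n;
    }

    std::size_t size() const { return pending_.size(); }

private:
    std::deque<PendingRequest> pending_;
};
```

The budget keeps the cost per update bounded, at the price of some requests being answered a few updates late, which is one more reason the caller has to be prepared for a delayed or failed result.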

Path to Target

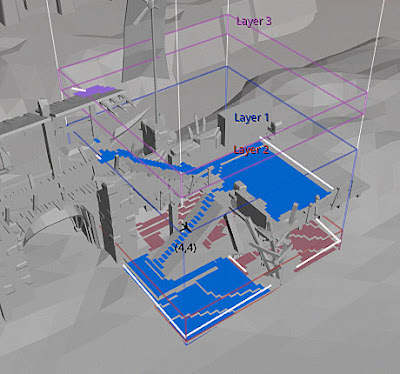

When the request is processed, the movement system will find the best path to the goal location. If something is blocking the path, a partial path will be used and the caller is signaled about the result. From this point on, the system will do its best to move the agent along this route to the best location that was just found.
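As an illustration of the partial path idea, here is a small sketch that uses a breadth-first search on a character grid instead of a navmesh query, just to keep it short. If the goal is unreachable, it returns the path to the reachable cell closest to the goal (by Manhattan distance) and flags the result as partial. `PathResult` and `findPath` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdlib>
#include <queue>
#include <string>
#include <vector>

struct PathResult {
    std::vector<int> cells;  // cell indices from the start to the end of the path
    bool partial;            // true if the goal could not be reached
};

// grid: rows of equal length, '#' is blocked, anything else is walkable.
// start and goal are cell indices (y * width + x); start must be walkable.
PathResult findPath(const std::vector<std::string>& grid, int start, int goal) {
    assert(!grid.empty());
    const int h = static_cast<int>(grid.size());
    const int w = static_cast<int>(grid[0].size());
    auto dist = [&](int c) {
        return std::abs(c % w - goal % w) + std::abs(c / w - goal / w);
    };

    std::vector<int> parent(w * h, -1);
    std::queue<int> open;
    parent[start] = start;
    open.push(start);
    int best = start;  // reachable cell closest to the goal so far

    while (!open.empty()) {
        const int cur = open.front();
        open.pop();
        if (dist(cur) < dist(best)) best = cur;
        if (cur == goal) break;
        const int x = cur % w, y = cur / w;
        const int nx[4] = {x + 1, x - 1, x, x};
        const int ny[4] = {y, y, y + 1, y - 1};
        for (int i = 0; i < 4; ++i) {
            if (nx[i] < 0 || nx[i] >= w || ny[i] < 0 || ny[i] >= h) continue;
            const int n = ny[i] * w + nx[i];
            if (grid[ny[i]][nx[i]] == '#' || parent[n] != -1) continue;
            parent[n] = cur;
            open.push(n);
        }
    }

    // Walk the parent links back from the best cell to the start.
    PathResult r;
    r.partial = (best != goal);
    for (int c = best;; c = parent[c]) {
        r.cells.push_back(c);
        if (c == start) break;
    }
    std::reverse(r.cells.begin(), r.cells.end());
    return r;
}
```

The caller gets a usable path either way and can decide from the `partial` flag whether the result is good enough.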

Path Following

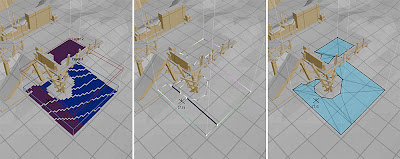

If a disturbance is found while following the path, the movement system will do a small local search (the same as the topology optimization) in order to fix it. If the disturbance cannot be solved, the system will signal that the path is blocked and, unless it is interrupted, continue to move the agent up to the last valid location on the current path.
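The check described above could look roughly like the sketch below. It assumes the corridor is a plain list of polygon ids and that the validity test and the local repair are supplied from outside. `FollowStatus` and `checkCorridor` are hypothetical names, and the repair function stands in for whatever bounded local search the movement system uses.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <vector>

enum class FollowStatus { Ok, Repaired, Blocked };

// corridor:    polygon ids along the current path (hypothetical representation).
// isValid:     reports whether a polygon can still be traversed.
// localRepair: tries to patch the corridor from the broken index onwards
//              using a small bounded search; returns true on success.
FollowStatus checkCorridor(
    std::vector<int>& corridor,
    const std::function<bool(int)>& isValid,
    const std::function<bool(std::vector<int>&, std::size_t)>& localRepair)
{
    assert(isValid && localRepair);

    // Find the first polygon that is no longer traversable.
    std::size_t broken = 0;
    while (broken < corridor.size() && isValid(corridor[broken])) ++broken;
    if (broken == corridor.size()) return FollowStatus::Ok;

    // Cheap fix first.
    if (localRepair(corridor, broken)) return FollowStatus::Repaired;

    // Could not fix it: keep only the valid prefix so the agent can
    // still walk up to the last valid location, and report the block.
    corridor.resize(broken);
    return FollowStatus::Blocked;
}
```

On `Blocked` the movement system would notify the higher level logic, which then decides whether the agent keeps walking along the truncated corridor.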

API

This could lead to the following interface between the high level logic and the movement request.

struct MovementRequestCallback
{
    // Called after path query is processed.
    // (Path result accessible via ag->corridor)
    // Return true if path result is accepted.
    virtual bool result(Agent* ag) = 0;

    // Called when path is blocked and
    // cannot be fixed.
    // Return true if movement should be continued.
    virtual bool blocked(Agent* ag) = 0;

    // Called when the agent has reached
    // the end of the path trigger area.
    // Return true if movement should be continued.
    virtual bool done(Agent* ag) = 0;
};

bool dtCrowd::requestMovement(int agent,
    const float* pos, const dtPolyRef ref,
    MovementRequestCallback* cb);

The 'blocked' function allows the higher level logic to handle more costly replans. For example, the higher level logic can try to move to a location 3 times until it gives up and tries something else, and it can even react to replans if necessary.

The 'done' function makes it possible to inject extra logic for what to do when the path is finished. For example, a 'follow enemy' behavior may want to keep on following the goal even after it is reached, whilst 'move to cover' might do a state transition to some other behavior when the movement is done.
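Here is a rough sketch of how two such behaviors might implement the callback. `Agent` is stubbed out, the virtual destructor is my addition, and `MoveToCover`, `FollowEnemy` and the attempt limit are hypothetical. I am also assuming that each 'blocked' notification counts as one failed attempt.

```cpp
#include <cassert>

struct Agent { int id; };  // stub; the real agent would carry the corridor

struct MovementRequestCallback {
    virtual ~MovementRequestCallback() {}
    virtual bool result(Agent* ag) = 0;   // true: accept the path result
    virtual bool blocked(Agent* ag) = 0;  // true: continue movement
    virtual bool done(Agent* ag) = 0;     // true: continue movement
};

// Gives up after three attempts and stops once the cover is reached.
class MoveToCover : public MovementRequestCallback {
public:
    bool result(Agent* ag) override {
        assert(ag != nullptr);
        return true;  // accept partial paths too
    }
    bool blocked(Agent* ag) override {
        assert(ag != nullptr);
        if (attempts_ >= kMaxAttempts) return false;  // try something else
        ++attempts_;  // the initial request counted as attempt one
        return true;
    }
    bool done(Agent* ag) override {
        assert(ag != nullptr);
        reachedCover_ = true;  // a state transition would be triggered here
        return false;
    }
    bool reachedCover() const { return reachedCover_; }

private:
    static const int kMaxAttempts = 3;
    int attempts_ = 1;
    bool reachedCover_ = false;
};

// Never considers the movement finished; keeps chasing the goal.
class FollowEnemy : public MovementRequestCallback {
public:
    bool result(Agent* ag) override { assert(ag != nullptr); return true; }
    bool blocked(Agent* ag) override { assert(ag != nullptr); return true; }
    bool done(Agent* ag) override { assert(ag != nullptr); return true; }
};
```

With this split, the movement system only needs to call the three functions at the right moments, and all the behavior specific bookkeeping (attempt counters, state transitions) lives in the callback objects.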

The general idea is to move as much of the state handling behavior as possible out of the general code, and to make the replan as cheap as possible. The downside is that the replan cannot react to big changes in the game world, but I argue that this is not necessary and should be handled by higher level logic anyway (e.g. when the path becomes much longer).

What do you think?