- Extending Obstacle Avoidance Methods through Multiple Parameter-Space Transformations

- Reactive Navigation of Multiple Moving Agents by Collaborative Resolution of Conflicts

- Cooperative Navigation Function based Navigation on Multiple Mobile Robots

- Multi Robotic Conflict Resolution by Cooperative Velocity and Direction Control

Space Transformations

Parameter space transformation feels like something that could be a good base for local avoidance using animation-driven motion. The rough idea is that you have a parametrized trajectory (it could be an arc, a two-point turn, or anything else), and you transform the obstacles in the world into a space where the trajectory is one dimension and the parameter is the other.

My TOI sampling method basically does that using straight trajectories. Another notable velocity-space local navigation method is the Dynamic Window Approach.
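To make the straight-trajectory case a bit more concrete, here is a minimal sketch of a time-of-impact query between two disc-shaped agents moving at constant velocity. It is not the TOI sampling code itself; the function name `time_of_impact` and the tuple-based vectors are assumptions made just for this illustration.

```python
import math

def time_of_impact(pos_a, vel_a, rad_a, pos_b, vel_b, rad_b):
    """Earliest t >= 0 at which two moving discs touch, or None if they never do."""
    # Work in the frame of agent B, so only A moves (with the relative velocity).
    px, py = pos_a[0] - pos_b[0], pos_a[1] - pos_b[1]
    vx, vy = vel_a[0] - vel_b[0], vel_a[1] - vel_b[1]
    r = rad_a + rad_b

    # Solve |p + v*t|^2 = r^2, a quadratic in t.
    a = vx * vx + vy * vy
    b = 2.0 * (px * vx + py * vy)
    c = px * px + py * py - r * r

    if c <= 0.0:
        return 0.0   # already touching or overlapping
    if a < 1e-12:
        return None  # no relative motion, the gap never closes
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # the straight paths miss each other
    t = (-b - math.sqrt(disc)) / (2.0 * a)
    return t if t >= 0.0 else None  # negative t means the contact was in the past
```

For example, two agents of radius 0.5 that start 10 units apart and approach head-on at unit speed touch at t = 4.5. Sampling a set of candidate velocities and comparing the times returned by a query like this is one way to rank the candidates.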

Co-operation for Conflict Resolution

The good and the bad of many reactive obstacle avoidance methods is that they are local and egocentric. You don't need much information from other agents in order to avoid them. That was the good bit.

The hard thing is that it often results in flickering solutions. A classic example is playing chicken: two agents move towards each other and have to steer clear to avoid colliding (a similar case is a faster agent trying to overtake a slower one). One possible result is that both agents choose to avoid each other on the same side, and on the next iteration, 50 ms later, they both decide to avoid on the other side. They will continue this until they eventually collide.

RVO, HRVO and many other methods have been proposed to solve this case. Krishna's and Hexmoor's work continues that saga by using an additional graph structure to resolve the conflicts. I'm still digesting Krishna's and Hexmoor's solution, but it gave me an idea.

Reactive Navigation as Collision Response Problem

The first impression I got from the co-operative graph method was of the islands used in collision detection. Then I remembered the different spaces where collisions can be detected, and the next obvious thing was an idea combining the two and bringing aboard a few things from collision detection.

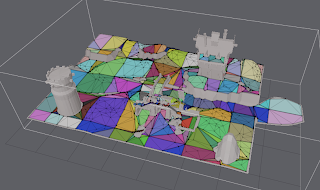

One way to look at the navigation problem is to think of it in 3D space, where x and y are the Cartesian coordinates of an agent and z is time. In that space-time world, the agents become skewed cylinders.

Now that our aim is to create collision-free paths in that space, we can do that simply by doing collision detection and response in that space. So I went and prototyped that, and it kinda works pretty well in simple test cases. The result was a boring scene of 4 agents crossing each other and bumping into things (yay!), as can be witnessed in the following picture.

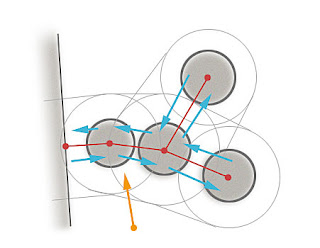

I used a similar iterative method as you would use for Verlet collision detection. For each pair (i,j) of agents, I calculate the point of closest approach along their velocities:

vab = ai.vel - aj.vel

closestPt_PointRay(aj.pos, ai.pos, vab, t)

hiti = ai.pos + ai.vel*t

hitj = aj.pos + aj.vel*t

After that I proceed with the usual circle-circle collision response by moving them apart, and that new resolved location is the new heading of the agent. Shake it for a dozen iterations and you have resolved your future collisions. When the agents are really close to each other, the result is steering away from the other agent. This leads to some jags in the path (as can be seen in the picture too).
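The pairwise step described above can be sketched in Python roughly as follows. This is a hedged reconstruction, not the prototype's actual code: the `Agent` class, the `horizon` clamp, the sideways tie-break for perfectly head-on agents, and keeping each agent's speed fixed while turning it towards the resolved point are all assumptions made for this example.

```python
import math
from dataclasses import dataclass

@dataclass
class Agent:
    pos: tuple  # (x, y)
    vel: tuple  # (vx, vy)
    rad: float

def closest_approach(ai, aj, horizon):
    """Time and positions at which ai and aj are nearest, with t clamped to [0, horizon]."""
    vab = (ai.vel[0] - aj.vel[0], ai.vel[1] - aj.vel[1])
    rel = (aj.pos[0] - ai.pos[0], aj.pos[1] - ai.pos[1])
    vv = vab[0] * vab[0] + vab[1] * vab[1]
    # Closest point to aj.pos on the ray from ai.pos along the relative velocity.
    t = 0.0 if vv < 1e-12 else (rel[0] * vab[0] + rel[1] * vab[1]) / vv
    t = min(max(t, 0.0), horizon)
    hiti = (ai.pos[0] + ai.vel[0] * t, ai.pos[1] + ai.vel[1] * t)
    hitj = (aj.pos[0] + aj.vel[0] * t, aj.pos[1] + aj.vel[1] * t)
    return t, hiti, hitj

def steer_towards(agent, target):
    """New velocity pointing at target, keeping the current speed (an assumption)."""
    dx, dy = target[0] - agent.pos[0], target[1] - agent.pos[1]
    dist = math.hypot(dx, dy)
    if dist < 1e-9:
        return agent.vel
    speed = math.hypot(agent.vel[0], agent.vel[1])
    return (dx / dist * speed, dy / dist * speed)

def resolve_future_collisions(agents, horizon=10.0, iterations=12):
    """Iteratively push predicted overlaps apart and re-aim the agents."""
    for _ in range(iterations):
        for i in range(len(agents)):
            for j in range(i + 1, len(agents)):
                ai, aj = agents[i], agents[j]
                _, hiti, hitj = closest_approach(ai, aj, horizon)
                dx, dy = hiti[0] - hitj[0], hiti[1] - hitj[1]
                dist = math.hypot(dx, dy)
                pen = ai.rad + aj.rad - dist
                if pen <= 0.0:
                    continue  # no predicted overlap for this pair
                if dist > 1e-9:
                    nx, ny = dx / dist, dy / dist
                else:
                    # Predicted positions coincide: push sideways to the relative velocity.
                    px, py = -(ai.vel[1] - aj.vel[1]), ai.vel[0] - aj.vel[0]
                    plen = math.hypot(px, py)
                    nx, ny = (px / plen, py / plen) if plen > 1e-9 else (1.0, 0.0)
                push = 0.5 * pen  # each agent takes half of the correction
                ai.vel = steer_towards(ai, (hiti[0] + nx * push, hiti[1] + ny * push))
                aj.vel = steer_towards(aj, (hitj[0] - nx * push, hitj[1] - ny * push))
```

When the closest approach is right now (t = 0), the resolved point is just the agent's own position pushed away from the other agent, so the new heading points directly away from it, which matches the steering-away behaviour seen at close range.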

Ideally this method should also allow time to be included in the response equation. If an agent is moving in some direction, the result is a nicely skewed cylinder, but if the agent's location in the future is the same as the current one, then the cylinder is not skewed at all. I have not quite figured out how to make the collisions affect time, but maybe there is a good way to do that.